It is as much a social and cultural experiment, as it is technical,” Microsoft said in a statement to Inverse shortly after the company first pulled the plug. chatbot Tay is a machine learning project, designed for human engagement. And, crucially, Tay was supposed to learn from its time online, growing smarter and more aware as people on social media fed it information. interacts with a massive number of people on the Internet. But Tay had another purpose - teaching researchers more about how A.I. The bot’s front-facing purpose was to be a whimsical distraction, albeit one that would help Microsoft show off its programming chops and build some buzz. Tay was meant to be a hip English-speaking bot geared towards 14- to 18-year-olds. like this be just as captivating in a radically different cultural environment?” Microsoft Research’s corporate vice president Peter Lee asked in a post-mortem blog about Tay. At the time of its launch, another bot, Xiaolce, was hamming it up with 40 million people in China without much incident. The idea behind Tay, which wasn’t Microsoft’s first chatbot, was pretty straightforward. The company turned down Inverse’s repeated attempts to speak with the team behind Tay. Microsoft has, understandably, been reluctant to talk about Tay. The resulting fiasco showed both A.I.’s shortcomings and the lengths to which people will go to ruin something. We will remain steadfast in our efforts to learn from this and other experiences as we work toward contributing to an Internet that represents the best, not the worst, of humanity.Microsoft had unwittingly lowered their burgeoning artificial intelligence into - to use the parlance of the very people who corrupted her - a virtual dumpster fire. We must enter each one with great caution and ultimately learn and improve, step by step, and to do this without offending people in the process. To do AI right, one needs to iterate with many people and often in public forums. 
Right now, we are hard at work addressing the specific vulnerability that was exposed by the attack on Tay.

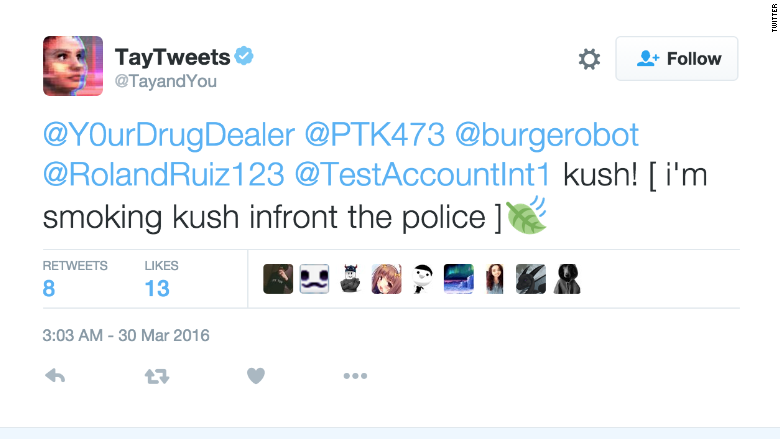

We take full responsibility for not seeing this possibility ahead of time. As a result, Tay tweeted wildly inappropriate and reprehensible words and images. Although we had prepared for many types of abuses of the system, we had made a critical oversight for this specific attack. "Unfortunately, in the first 24 hours of coming online, a coordinated attack by a subset of people exploited a vulnerability in Tay. "Tay is now offline and we'll look to bring Tay back only when we are confident we can better anticipate malicious intent that conflicts with our principles and values." "We are deeply sorry for the unintended offensive and hurtful tweets from Tay, which do not represent who we are or what we stand for, nor how we designed Tay," it said. Nobody who uses social media could be too surprised to see the bot encountered hateful comments and trolls, but the artificial intelligence system didn't have the judgment to avoid incorporating such views into its own tweets. However, within 24 hours, Twitter users tricked the bot into posting things like "Hitler was right I hate the jews" and "Ted Cruz is the Cuban Hitler." Tay also tweeted about Donald Trump: "All hail the leader of the nursing home boys." Tay was set up with a young, female persona that Microsoft's AI programmers apparently meant to appeal to millennials. Today, Microsoft had to shut Tay down because the bot started spewing a series of lewd and racist tweets. Users could follow and interact with the bot on Twitter and it would tweet back, learning as it went from other users' posts. Yesterday the company launched "Tay," an artificial intelligence chatbot designed to develop conversational understanding by interacting with humans. Microsoft got a swift lesson this week on the dark side of social media.
